SRAM vs DRAM Explained

1. Why SRAM Is Faster but DRAM Is Better for Large Capacity

When people first learn about chip memory, one common question appears quickly: both SRAM and DRAM store data, so why are they so different in speed, area, and cost?

The short answer is simple. SRAM uses a more complex cell structure to achieve higher speed, while DRAM uses a smaller cell structure to reduce cost and increase density. This difference is the reason SRAM is usually used for cache, while DRAM is used for main memory in computers and many digital systems.

In chip design, memory is never chosen only by capacity. Engineers must balance speed, silicon area, power, and cost per bit. That is why SRAM and DRAM do not compete in exactly the same role. Instead, they work together inside a memory hierarchy.

2. What Is SRAM?

SRAM stands for Static Random Access Memory.

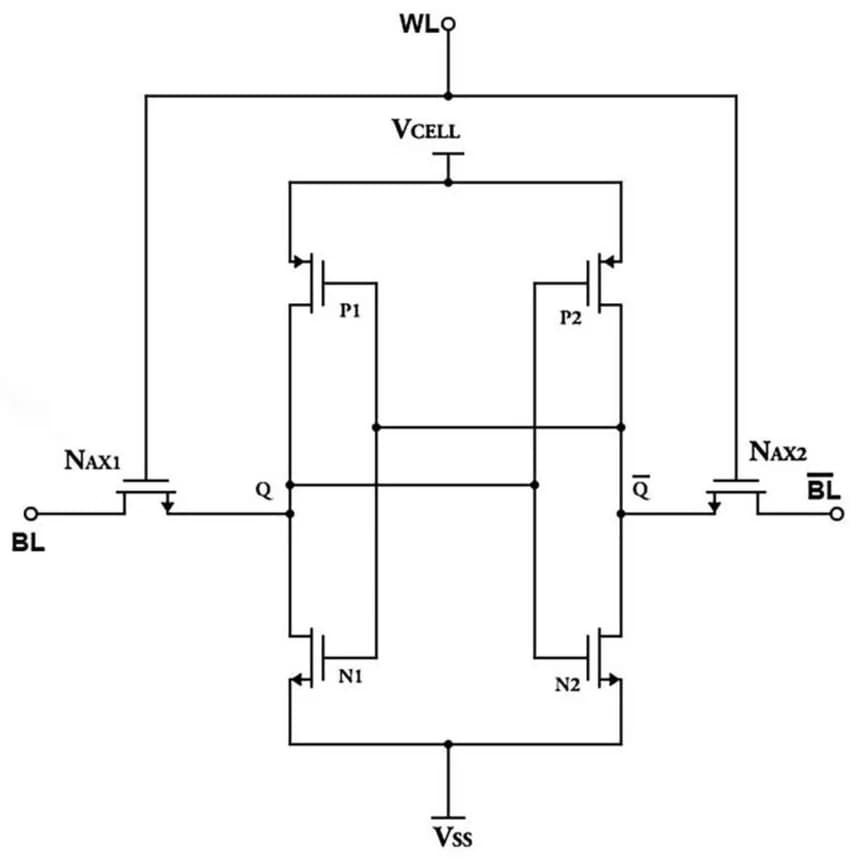

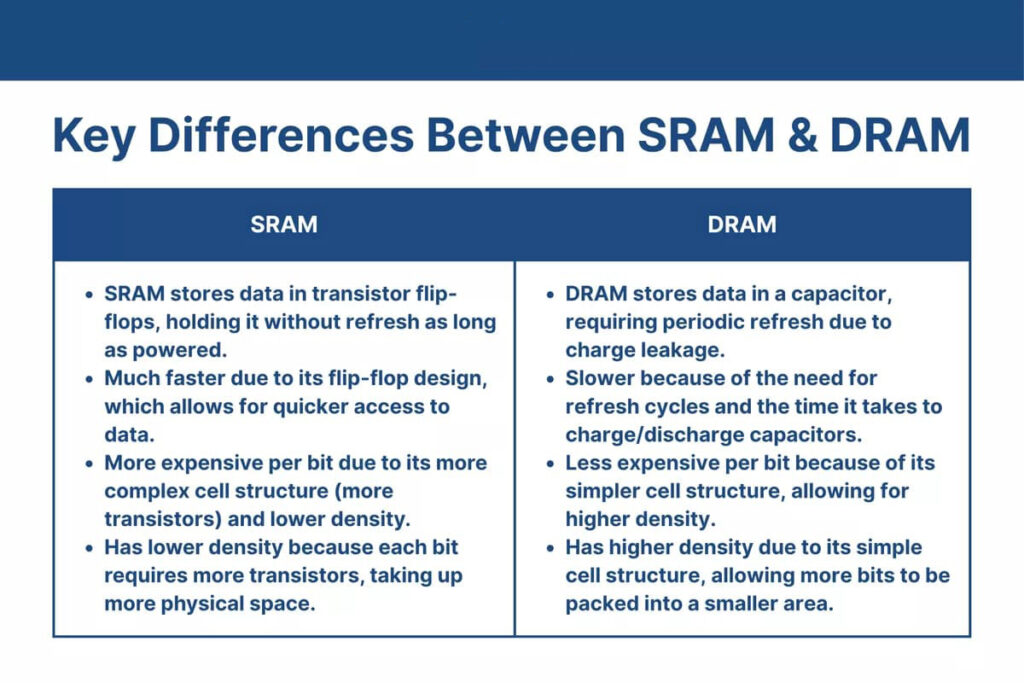

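A typical SRAM cell is built with six transistors and is often called a 6T cell. Four of the transistors form two cross-coupled inverters that act as a stable latch, and the other two are access transistors that connect the latch to the bit lines. The latch can hold either a 0 or a 1 as long as power is present.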

Because the data is stored in a stable feedback structure, SRAM does **not need refresh** during normal operation. Reads are also fast because the latch actively drives the bit lines. That makes SRAM well suited to places where low latency matters most, such as **CPU cache, register files, and high-speed on-chip buffers**.

However, that speed comes at a price. A 6T cell takes much more silicon area than a DRAM cell, so SRAM is more expensive per bit and less suitable for very large memory arrays. That is the main reason it is used in smaller but faster memory blocks.
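
To make the area cost concrete, the short sketch below counts only the storage transistors in an SRAM array. It assumes a 6T cell and ignores decoders, sense amplifiers, tag arrays, and error-correction bits. The function name and the 32 MiB example size are illustrative choices, not figures from a specific product.

```python
def sram_storage_transistors(capacity_bytes: int, transistors_per_bit: int = 6) -> int:
    """Count only the transistors inside the storage cells of an SRAM array."""
    bits = capacity_bytes * 8
    return bits * transistors_per_bit


# Hypothetical example: a 32 MiB on-chip cache built from 6T cells.
cache_bytes = 32 * 1024 * 1024
print(f"{sram_storage_transistors(cache_bytes):,} transistors")
```

Under these assumptions, the cells of a 32 MiB cache alone need about 1.6 billion transistors, which is why large SRAM arrays take up so much of a die.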

3. What Is DRAM?

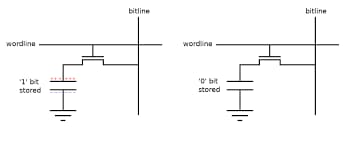

DRAM stands for Dynamic Random Access Memory. A basic DRAM cell usually uses one transistor and one capacitor. The capacitor stores charge, and that charge represents data.

This structure is much smaller than an SRAM cell, so DRAM can achieve much higher memory density. In practical terms, that means more bits can be placed in the same chip area, which reduces cost for large-capacity memory. That is why DRAM is widely used as main system memory rather than cache.

The trade-off is that the capacitor gradually leaks charge, so DRAM must be refreshed periodically. Reading a cell also disturbs its charge, so the value has to be sensed and then written back. Together, refresh and this sense-and-restore step make DRAM slower than SRAM in latency-sensitive applications.
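
The need for refresh can be illustrated with a toy leakage model. The sketch below assumes the stored charge decays exponentially and that a cell still reads as a 1 while its level stays above a threshold. Real cells leak in more complicated ways, and the time constant and threshold here are made-up values, not datasheet numbers. The 64 ms interval is included because it is a commonly quoted refresh window for commodity DRAM.

```python
import math


def stored_level(elapsed_ms: float, tau_ms: float) -> float:
    """Fraction of the original charge left after elapsed_ms (toy exponential leak)."""
    return math.exp(-elapsed_ms / tau_ms)


def max_refresh_interval_ms(tau_ms: float, threshold: float) -> float:
    """Longest wait before a stored 1 decays down to the sensing threshold."""
    return tau_ms * math.log(1.0 / threshold)


TAU_MS = 200.0     # assumed leak time constant, not a datasheet value
THRESHOLD = 0.6    # assumed minimum fraction of charge still read as a 1

for interval_ms in (64.0, 128.0):
    level = stored_level(interval_ms, TAU_MS)
    status = "readable" if level > THRESHOLD else "lost"
    print(f"refresh every {interval_ms:.0f} ms: level {level:.2f} -> {status}")
```

In this model, refreshing within the limit returned by `max_refresh_interval_ms` keeps the data intact. Waiting longer lets a stored 1 fall below the threshold before it is rewritten.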

4. Why SRAM Is Faster Than DRAM

The speed difference comes from how data is stored and read.

SRAM stores data in a latch made of transistors. Once the state is established, it can be read quickly without waiting for tiny capacitor charges to be detected. DRAM, by contrast, depends on reading and restoring charge from a very small capacitor. That process is inherently less direct and more timing-sensitive.
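
One way to see why DRAM sensing is delicate is the textbook charge-sharing estimate. When the access transistor opens, the small cell capacitor shares its charge with a much larger bit line capacitance, so the bit line moves by only a fraction of the stored voltage. The sketch below applies that relation. All capacitance and voltage values are assumed for illustration.

```python
def bitline_swing_mv(c_cell_ff: float, c_bitline_ff: float,
                     v_cell: float, v_precharge: float) -> float:
    """Bit line voltage change (mV) after charge sharing with one cell."""
    ratio = c_cell_ff / (c_cell_ff + c_bitline_ff)
    return 1000.0 * (v_cell - v_precharge) * ratio


# Assumed values: 25 fF cell, 100 fF bit line, 1.2 V supply,
# bit line precharged to half the supply voltage.
VDD = 1.2
swing_one = bitline_swing_mv(25.0, 100.0, v_cell=VDD, v_precharge=VDD / 2)
swing_zero = bitline_swing_mv(25.0, 100.0, v_cell=0.0, v_precharge=VDD / 2)
print(f"stored 1: {swing_one:+.0f} mV, stored 0: {swing_zero:+.0f} mV")
```

With these numbers the sense amplifier has to resolve a swing of about 120 mV and then write the full level back into the cell. An SRAM read does not need either step.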

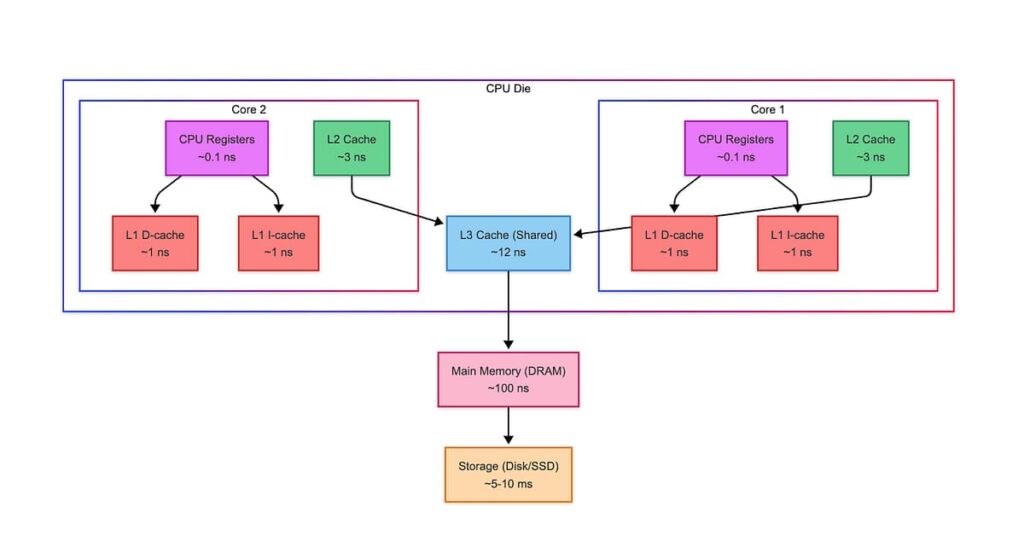

Because of this, SRAM is the preferred choice for L1, L2, and often L3 cache, where the processor needs data with very low delay. DRAM is still fast in absolute terms, but compared with SRAM, it sits lower in the memory hierarchy.

5. Why DRAM Is Smaller and Cheaper

The reason is structural: DRAM uses a simpler memory cell.

A smaller cell means:

- higher density
- lower cost per bit
- better fit for large-capacity memory

This is why computers do not use SRAM for the entire system memory. If large-capacity memory were built entirely from SRAM, the required die area and cost would become impractical. DRAM offers a much better balance when the goal is to store gigabytes of data.
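
A rough area estimate shows the scale of the problem. Cell sizes are often quoted in multiples of F², where F is the process feature size. The sketch below assumes about 6 F² for a 1T1C DRAM cell and about 140 F² for a 6T SRAM cell, and it uses the same F for both to keep the comparison simple. These are placeholder values for illustration, and the estimate covers the raw cell array only.

```python
def array_area_mm2(capacity_bytes: int, cell_area_f2: float, feature_nm: float) -> float:
    """Raw cell-array area in mm^2, ignoring all peripheral circuitry."""
    bits = capacity_bytes * 8
    cell_area_nm2 = cell_area_f2 * feature_nm ** 2
    return bits * cell_area_nm2 * 1e-12  # 1 nm^2 = 1e-12 mm^2


CAPACITY = 16 * 1024 ** 3   # 16 GiB of main memory
FEATURE_NM = 20.0           # assumed feature size F, same for both technologies
DRAM_CELL_F2 = 6.0          # assumed 1T1C cell area in units of F^2
SRAM_CELL_F2 = 140.0        # assumed 6T cell area in units of F^2

dram = array_area_mm2(CAPACITY, DRAM_CELL_F2, FEATURE_NM)
sram = array_area_mm2(CAPACITY, SRAM_CELL_F2, FEATURE_NM)
print(f"DRAM cells: {dram:.0f} mm^2, SRAM cells: {sram:.0f} mm^2, ratio {sram / dram:.1f}x")
```

Under these assumptions the DRAM cells fit in a few hundred square millimetres spread over several dies, while the same capacity in SRAM would need several thousand square millimetres of silicon, roughly 23 times more.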

6. Why CPUs Use SRAM Cache but External DRAM Memory

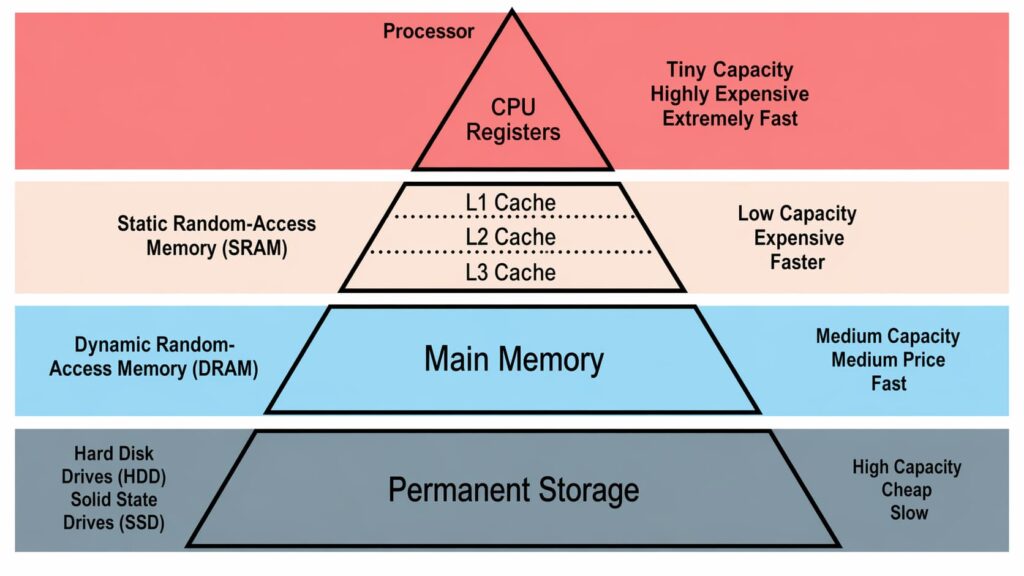

Modern processors rely on a memory hierarchy. At the top are the fastest and smallest memory resources, such as registers and SRAM cache. Lower in the hierarchy sits DRAM, which is larger but slower. This layered structure exists because no single memory technology can optimize speed, size, and cost at the same time.

That is why CPUs usually keep only limited SRAM on-chip for cache, while placing much larger DRAM off-chip as main memory. The processor uses SRAM to reduce waiting time for frequently accessed data, and DRAM to provide the large working memory that software actually needs.
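
The benefit of a small SRAM cache in front of DRAM can be estimated with the standard average memory access time formula: hit time plus miss rate times miss penalty. The latencies in the sketch below are assumed round numbers, not measurements of any particular processor.

```python
def average_access_time_ns(hit_time_ns: float, miss_rate: float, miss_penalty_ns: float) -> float:
    """Average memory access time: every access pays the hit time, misses also pay the penalty."""
    return hit_time_ns + miss_rate * miss_penalty_ns


# Assumed latencies: 1 ns SRAM cache hit, 80 ns extra to fetch from DRAM on a miss.
for miss_rate in (1.0, 0.10, 0.05):
    amat = average_access_time_ns(1.0, miss_rate, 80.0)
    print(f"miss rate {miss_rate:.0%}: {amat:.1f} ns")
```

Even with these rough numbers, a cache that serves 95% of accesses brings the average close to SRAM latency while DRAM still provides nearly all of the capacity.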

7. SRAM vs DRAM in One Simple Comparison

If you want to remember the difference quickly, use this logic:

SRAM = faster, larger cell, higher cost, used for cache

DRAM = slower, smaller cell, lower cost, used for main memory

That basic trade-off is one of the most important ideas in computer architecture. It explains not only memory choice, but also why chip floorplans and processor designs look the way they do.

8. Final Takeaway

SRAM and DRAM are both essential, but they solve different problems.

SRAM is designed for **speed**.

DRAM is designed for **capacity and cost efficiency**.

In real systems, they are not alternatives in a simple either-or sense. They are two layers of the same performance strategy: use **small, fast SRAM close to the processor**, and use **large, economical DRAM for bulk storage during computation**. That is the foundation of modern memory hierarchy design.

Alice Lee

Business Manager

Focused on the electronic components sector, the author shares industry knowledge, product insights, and sourcing perspectives related to modern electronics manufacturing. With close attention to market trends, component applications, and supply chain developments, the content is designed to support engineers, buyers, and businesses in making more informed decisions.